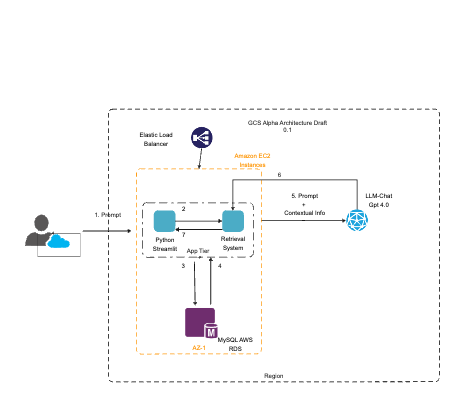

Cloud-Based Chat Application Architecture Diagram

WSKRQoTe

Published on 2024-01-09

This diagram presents an architecture draft (version 0.1) of a cloud-based chat application, titled 'GCS Alpha Architecture Draft'. It shows the components and data flow for handling user prompts and returning responses generated by the LLM (Chat GPT 4.0). The process begins with a user prompt (1), which enters the system through an Elastic Load Balancer, an AWS service. The balancer distributes requests across multiple Amazon EC2 instances (2), so that traffic can still be served when one instance is busy or unavailable. The application layer is built in Python with Streamlit, an open-source app framework (3), and communicates with a separate Retrieval System (4), which suggests that retrieval runs as its own service rather than inside the application. The retrieval system queries a MySQL instance on AWS RDS (Relational Database Service) (5), located in a single availability zone (AZ-1), which provides persistent storage. The numbered arrows give the sequence of operations, from receiving the prompt to returning a response to the user, with the retrieved contextual information incorporated into the dialogue. The entire architecture is enclosed in a dashed line, indicating that it runs within a single cloud region, possibly for regulatory or performance reasons.
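The numbered flow can be illustrated with a minimal Python sketch. It is not taken from the diagram: the function names, the `documents` table, and the sample rows are all hypothetical, an in-memory SQLite database stands in for the MySQL RDS instance, the LLM call is a stub that only echoes its input, and the Streamlit front end and load balancer are left out.

```python
import sqlite3


def make_store() -> sqlite3.Connection:
    """In-memory SQLite stand-in for the MySQL RDS instance (5). Assumption."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE documents (id INTEGER PRIMARY KEY, body TEXT)")
    conn.executemany(
        "INSERT INTO documents (body) VALUES (?)",
        [
            ("Refunds are processed within five days.",),
            ("Support is available on weekdays.",),
        ],
    )
    conn.commit()
    return conn


def retrieve_context(conn: sqlite3.Connection, prompt: str, limit: int = 3) -> list[str]:
    """Retrieval system (4): naive keyword match against the stored rows."""
    words = [w.strip(".,?!").lower() for w in prompt.split()]
    keywords = [w for w in words if len(w) > 3]  # skip very short words
    hits = []
    for (body,) in conn.execute("SELECT body FROM documents ORDER BY id"):
        if any(k in body.lower() for k in keywords):
            hits.append(body)
    return hits[:limit]


def call_llm(augmented_prompt: str) -> str:
    """Hypothetical placeholder for the LLM call; it only echoes its input."""
    return f"[stub response] {augmented_prompt}"


def handle_prompt(conn: sqlite3.Connection, prompt: str) -> str:
    """Application layer (3): add retrieved context to the prompt, then call the LLM."""
    context = retrieve_context(conn, prompt)
    augmented = "Context:\n" + "\n".join(context) + "\n\nQuestion: " + prompt
    return call_llm(augmented)


if __name__ == "__main__":
    store = make_store()
    print(handle_prompt(store, "How long do refunds take?"))
```

In a real deployment the keyword match would likely be replaced by a proper search or embedding lookup, and `call_llm` by a request to the model provider; the sketch only shows where retrieved context enters the dialogue.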

Tag

AWS Infrastructure

Chatbot System Design

Cloud Computing Architecture
